
The `quick-eval` command provides a fast, reference-less evaluation of your agents and tools.

Note: For now, you can use only Python tools.

Unlike the `evaluate` command, it does not require ground truth datasets. Instead, it runs a lightweight check to identify common issues such as schema mismatches and hallucinations in tool calls.
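To make the two failure categories concrete, the following is a minimal sketch of what a reference-less check on a single tool call could look like. It is not the logic that `quick-eval` actually runs. The `TOOL_SCHEMAS` registry, the `classify_tool_call` function, and the `get_weather` tool are hypothetical names chosen for illustration, and the sketch covers only non-existent tools, not irrelevant ones.

```python
from typing import Any

# Hypothetical tool registry: tool name -> {parameter name: expected Python type}.
# A real evaluation would derive this from the tool definitions.
TOOL_SCHEMAS: dict[str, dict[str, type]] = {
    "get_weather": {"city": str, "days": int},
}


def classify_tool_call(name: str, args: dict[str, Any]) -> str:
    """Return "ok", "hallucination", or "schema_mismatch" for one tool call."""
    schema = TOOL_SCHEMAS.get(name)
    if schema is None:
        # The agent called a tool that is not defined anywhere.
        return "hallucination"
    if set(args) != set(schema):
        # Parameters are missing or unexpected.
        return "schema_mismatch"
    for param, expected_type in schema.items():
        if not isinstance(args[param], expected_type):
            # A parameter has the wrong type.
            return "schema_mismatch"
    return "ok"
```

No expected answers are needed for this kind of check: each call is judged only against the tool definitions, which is why no ground truth dataset is involved.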


Flags


Path to the configuration file with details about the evaluation settings.

Comma-separated list of test files or directories containing ground truth datasets. Required when not using a configuration file.

Directory containing tool definitions.

Directory where evaluation results will be saved. Required when not using a config file.

Path to the `.env` file that overrides the default environment.

Understanding the Summary Metrics Table

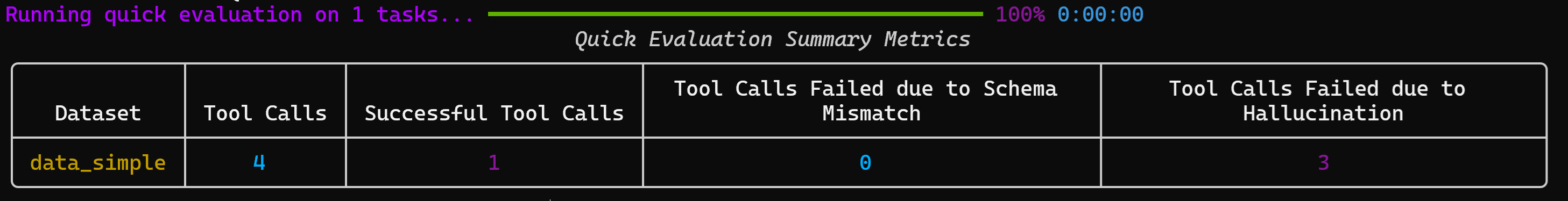

At the end of the evaluation, you will see a summary similar to the following one:

Metrics explained

Quick Evaluation Summary Metrics

| Metric | Description | Calculation / Type |
|---|---|---|
| Dataset | Name of the dataset used for quick evaluation | Text |
| Tool Calls | Total number of tool calls attempted during the evaluation | Integer (≥ 0) |
| Successful Tool Calls | Number of tool calls that executed successfully without errors | Integer (≥ 0) |
| Tool Calls Failed due to Schema Mismatch | Number of tool calls that failed because the input/output schema did not match expectations | Integer (≥ 0) |
| Tool Calls Failed due to Hallucination | Number of tool calls that failed because the agent invoked tools that were irrelevant or non-existent | Integer (≥ 0) |

If the value is equal to 1.0 or `True`, the table omits the result.
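As a rough illustration of how the summary metrics relate to one another, the sketch below tallies per-call outcomes into the five metrics described above. It is an assumption-laden example, not the tool's own reporting code: the `summarize` function and the outcome labels `"ok"`, `"schema_mismatch"`, and `"hallucination"` are hypothetical.

```python
from collections import Counter


def summarize(dataset: str, outcomes: list[str]) -> dict[str, object]:
    """Aggregate per-call outcome labels into the summary metrics."""
    counts = Counter(outcomes)  # missing labels count as 0
    return {
        "Dataset": dataset,
        "Tool Calls": len(outcomes),
        "Successful Tool Calls": counts["ok"],
        "Tool Calls Failed due to Schema Mismatch": counts["schema_mismatch"],
        "Tool Calls Failed due to Hallucination": counts["hallucination"],
    }


# Example: four tool calls, two of which failed for different reasons.
summary = summarize("demo", ["ok", "ok", "schema_mismatch", "hallucination"])
```

In this sketch every call falls into exactly one category, so the successful and failed counts add up to the total number of tool calls.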